2 April 2026

OpenClaw and the Move Towards More Structured AI Work

Exploring how AI tools are evolving from simple chat interfaces into structured systems that support repeatable workflows and practical knowledge work.

As AI tools continue to evolve, one of the more interesting shifts is the move away from isolated chat interactions and towards more structured, repeatable workflows. In AILC’s recent exploration, OpenClaw stood out as a useful example of this direction.

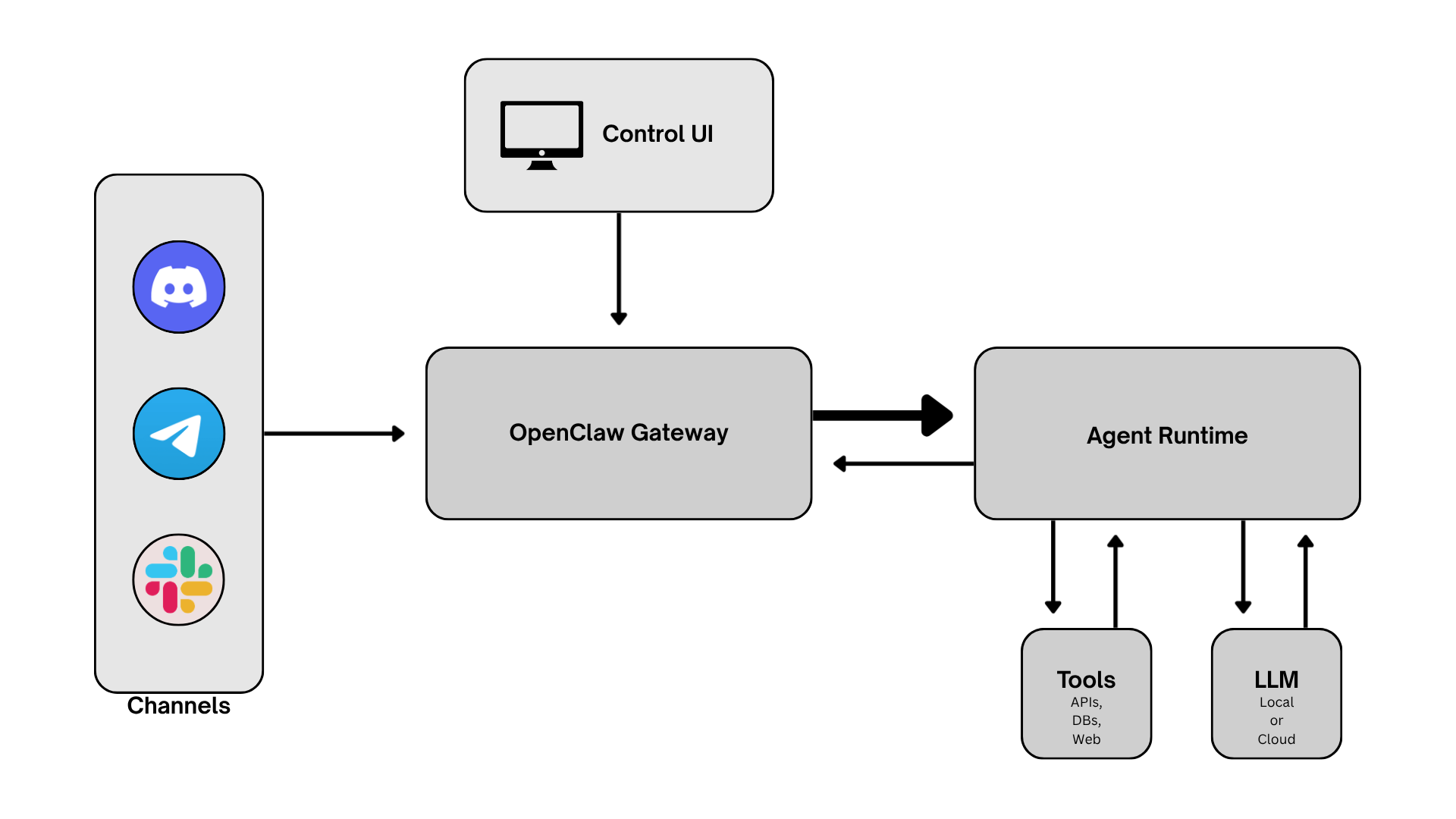

OpenClaw is a self-hosted AI assistant platform designed to support more than one-off question-and-answer exchanges. Instead of treating every interaction as a fresh start, platforms of this kind can maintain context, connect to tools, and support multi-step tasks that develop over time. That makes them particularly relevant in work settings where the goal is not simply to get an answer, but to move from raw inputs to something more organised and usable.

Why this matters

The significance of this kind of platform lies less in novelty and more in the type of work it can support. Systems such as OpenClaw are most useful when information needs to be gathered, filtered, summarised, and shaped into a draft, digest, or structured first-pass output.

This reflects a broader and more practical direction in AI use. Rather than focusing only on conversation, the emphasis shifts towards process support: helping users handle recurring tasks, reduce manual repetition, and create more consistent outputs that can then be reviewed and refined by a person.

Where it can be useful

This is where the model becomes more convincing in practice. A platform like OpenClaw can be valuable when there is a defined task, a selected set of sources, and a clear output format.

In AILC’s exploration, the focus was not on handing full autonomy to an AI agent. Instead, the interest was in testing how such a platform could support structured information workflows. That included a research-assistant style setup for reviewing selected AI-related sources, summarising updates, and producing digest-style outputs for human review. The project incorporated this flow into a daily pipeline that collected information from chosen websites, used earlier summaries as context, and generated structured briefing outputs.

For this kind of work, the platform is far better suited to controlled, repeatable tasks than to broad “do everything for me” expectations.
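
To make the shape of the daily pipeline described above more concrete, here is a minimal Python sketch. It is not OpenClaw's API and not AILC's actual implementation: every name in it (`Digest`, `DigestItem`, `run_daily_digest`, `fake_fetch`, `fake_summarise`, `context_days`) is hypothetical, and the fetching and summarising steps are passed in as plain functions so that any real tool could stand in for them. It only illustrates the three ideas from the text: a selected set of sources, earlier summaries reused as context, and a structured output that waits for human review.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Callable


@dataclass
class DigestItem:
    source: str   # where the material came from
    summary: str  # first-pass summary for a person to check


@dataclass
class Digest:
    day: date
    items: list[DigestItem] = field(default_factory=list)
    reviewed: bool = False  # stays False until a person signs off


def run_daily_digest(
    sources: list[str],
    fetch: Callable[[str], str],
    summarise: Callable[[str, list[str]], str],
    history: list[Digest],
    day: date,
    context_days: int = 3,  # assumption: how many earlier digests to reuse
) -> Digest:
    """Build one day's digest from a fixed list of sources."""
    # Earlier summaries become context for today's run.
    context = [
        item.summary
        for earlier in history[-context_days:]
        for item in earlier.items
    ]
    digest = Digest(day=day)
    for url in sources:
        text = fetch(url)
        digest.items.append(
            DigestItem(source=url, summary=summarise(text, context))
        )
    # Store the digest so the next run can use it as context.
    history.append(digest)
    return digest


# Stand-ins for a real fetcher and a real model call (both hypothetical).
def fake_fetch(url: str) -> str:
    return f"content from {url}"


def fake_summarise(text: str, context: list[str]) -> str:
    return f"{text[:40]} (context: {len(context)} earlier summaries)"
```

Calling `run_daily_digest` once per day with the same `history` list gives each run access to the summaries from previous days, and the `reviewed` flag keeps the human sign-off step explicit rather than implied.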

Key benefits

One of the clearest benefits is structure. Instead of starting from zero every time, users can work within a more repeatable process.

Continuity is another strength. For recurring tasks, previous outputs and ongoing context matter. A system that can support this kind of continuity may be more useful than a tool built only for isolated prompts.

There is also a practical advantage in reducing first-pass workload. In research-heavy or reporting-heavy work, AI is often most useful not as a final decision-maker, but as a support layer that helps gather, condense, and organise material so that people can review it more efficiently.

Important limitations

At the same time, the limits are just as important as the benefits.

First, systems like this still depend heavily on scope. They work best when tasks are narrow, sources are selected carefully, and the expected output is clearly defined. Without that structure, the quality and reliability of results become harder to assess.

Second, drafting and organising information should not be confused with independent judgement. Platforms like OpenClaw can support early-stage knowledge work, but human review remains essential, particularly where accuracy, relevance, and interpretation matter.

Third, self-hosting comes with an infrastructure cost. Because OpenClaw is designed to run on a user’s own device or server rather than as a fully managed hosted service, it requires dedicated resources, setup effort, and ongoing maintenance. In controlled internal environments this can be a worthwhile trade-off, but it is still an important consideration.

Why AILC found it worth exploring

OpenClaw is not only a platform under review. It also reflects a broader shift in AI: the move away from isolated question-and-answer interactions and towards systems that can manage context, connect to tools, and support more repeatable forms of knowledge work.

That is what makes platforms like this worth attention. They point to a more practical use of AI, where the value comes not just from generating answers, but from helping organise information, support structured processes, and produce more usable first-pass outputs.

For AILC, this is where the interest lies: understanding what these systems are, where they can genuinely support useful work, where their limitations become clear, and how they may fit into more responsible and practical forms of applied AI over time.